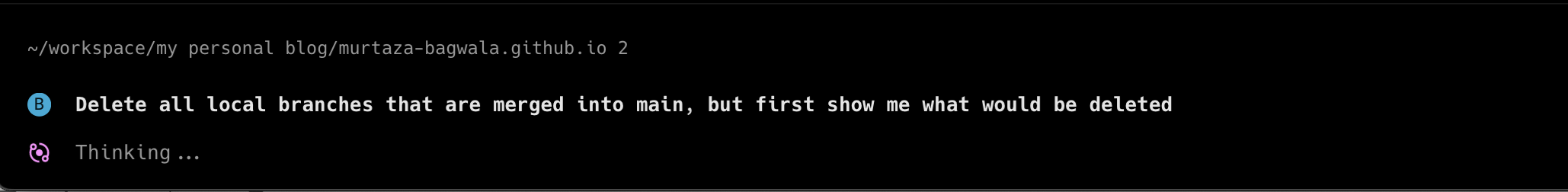

Picture this: a developer hits a bug. Before reading the error message fully, before forming any hypothesis, the ChatGPT tab is already open. A prompt goes in. An answer comes out. The answer gets accepted. The ticket gets closed.

Nothing feels wrong about that workflow. It feels fast. It feels productive. That is exactly the problem.

What cognitive offloading actually is

Cognitive offloading is the practice of using external tools to reduce the mental load of a task. You use a calculator instead of doing arithmetic in your head. You write a to-do list instead of holding seventeen things in working memory. You let GPS handle navigation so you can focus on driving.

This is not laziness. This is how intelligent people have always worked. Many of the tools humans have built, from writing to spreadsheets, are acts of cognitive offloading. The brain gets to focus on harder problems because the easier ones get delegated.

Cognitive offloading is smart. It frees up capacity. It reduces errors. It lets people operate above their natural cognitive ceiling.

What cognitive surrender actually is

Cognitive surrender is something completely different, and the distinction matters more than most people realize.

Cognitive surrender is when a person stops evaluating an answer altogether and simply adopts whatever the AI produces, without scrutiny, without sanity checking, without even a moment of "does this actually make sense?" The tool is no longer extending the person's thinking. It has replaced it.

The difference between the two:

- Cognitive offloading: you delegate a task, you still own the judgment

- Cognitive surrender: you hand over the judgment itself

One is a workflow optimization. The other is an abdication.

The research that makes this impossible to ignore

Wharton researchers Steven D. Shaw and Gideon Nave published a study in January 2026 titled "Thinking: Fast, Slow, and Artificial." It covered three experiments, 1,372 participants, and nearly 10,000 individual reasoning trials.

The findings are striking:

- People followed AI's correct advice 92.7% of the time — expected

- People followed AI's incorrect advice 79.8% of the time — alarming

Nearly 8 out of 10 times, when the AI was wrong, people still went along with it. The study breaks down how participants responded across all trials:

- Cognitive offloading (19.7%): consulted AI but still applied their own reasoning

- Failed overrides (7.1%): tried to think independently but deferred anyway

- Cognitive surrender (73.2%): accepted AI output with zero critical evaluation

73% of interactions ended in full surrender. That is not an edge case. That is the default.

What makes it worse: participants reported higher confidence in their answers after consulting AI, regardless of whether the AI was accurate. People were not just accepting wrong answers. They felt more certain about them.

Why developers need to pay attention

There is a tendency in engineering circles to treat AI tools purely as productivity wins and stop the conversation there. The productivity gains are real. One controlled study found that developers using Copilot completed a programming task 55.8% faster.

But research on developers using Copilot has also reported peer collaboration dropping by nearly 80%.

The code review conversations, the architecture debates, the debugging sessions where two people work through a problem together and both come out with a better mental model: those are disappearing, replaced by a solo loop of prompt and accept.

The issue is not that AI writes code. The issue is what happens when developers accept that code without understanding it. A missed bug is one problem. A missing mental model is a much bigger one — it affects every bug that comes after.

A 2025 study in the journal Societies surveyed 666 participants and found a significant negative correlation between frequent AI tool usage and critical thinking ability. The effect was strongest among younger participants, who had not built a deep reasoning foundation before the tools arrived.

The Tri-System brain

Shaw and Nave introduce what they call Tri-System Theory. The familiar dual-process model gives us System 1 (fast, intuitive, automatic) and System 2 (slow, deliberate, analytical). Their argument is that there is now a System 3: artificial cognition operating entirely outside the human brain.

The problem is that System 3 does not feel external. When someone opens a chat interface, receives an answer, and accepts it, the brain registers that as "I figured it out." The subjective experience matches genuine reasoning. The underlying process does not.

This is what makes cognitive surrender so insidious. It does not feel like surrender. It feels like efficiency.

The Google Effect, one level deeper

There is an older version of this problem. The Google Effect, documented in a 2011 study, showed that once people knew they could search for information, they were less likely to remember it. Memory shifted from content to location: people remembered where to find things, not the things themselves.

AI has taken this one step further. It is not just memory being outsourced now. It is the act of reasoning. The friction of not knowing, sitting with a problem, forming a hypothesis, being wrong and trying again — that entire process is increasingly optional.

That friction is not a bug. It is how understanding is built.

What good AI use actually looks like

This is not an argument against using AI tools. They are genuinely useful, and engineers who ignore them are working at a real disadvantage.

The Wharton researchers themselves recommend what they call architectural solutions rather than motivational ones. The goal is not to tell people to think harder. It is to build verification into the workflow before the AI answer even arrives.

In practice that looks like:

- Form a hypothesis before prompting. Even a rough one. "I think the issue is in the auth middleware" puts the brain in evaluation mode rather than reception mode.

- Ask why, not just what. Push for tradeoffs, failure cases, alternatives. AI output that cannot survive a follow-up question is a red flag.

- Treat every AI answer as a first draft. The output is a starting point, not a conclusion.

- Take the slow path sometimes. Not every problem needs to be resolved in 90 seconds. The ones worth sitting with often teach the most.
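One way to make the habits above architectural rather than motivational is to put a small gate in front of whatever assistant you use. The sketch below is purely illustrative: `HypothesisFirstSession`, `Review`, and the `ask_model` callable are hypothetical names, not part of any real tool or of the Wharton study. It refuses to send a prompt until a hypothesis is written down, asks for tradeoffs and failure cases along with the answer, and logs every accept or reject decision with a reason so you can see how often you simply go along with the output.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Review:
    """One prompt/answer cycle, kept so acceptance habits can be inspected."""
    question: str
    hypothesis: str
    answer: str
    accepted: bool
    reason: str


@dataclass
class HypothesisFirstSession:
    """Hypothetical wrapper that puts a hypothesis-first gate around an assistant."""
    # Assumption: any text-in/text-out function; swap in a real client yourself.
    ask_model: Callable[[str], str]
    log: List[Review] = field(default_factory=list)

    def ask(self, question: str, hypothesis: str) -> str:
        # Gate 1: no written hypothesis, no prompt.
        if not hypothesis.strip():
            raise ValueError("Write down a hypothesis before prompting.")
        # Ask why, not just what: request tradeoffs and failure cases up front.
        prompt = (
            f"{question}\n"
            f"My current hypothesis: {hypothesis}\n"
            "Explain why it is right or wrong, and list tradeoffs, "
            "failure cases, and alternatives."
        )
        return self.ask_model(prompt)

    def record(self, question: str, hypothesis: str, answer: str,
               accepted: bool, reason: str) -> None:
        # Gate 2: treat the answer as a draft; a verdict needs a stated reason.
        if not reason.strip():
            raise ValueError("State why you accept or reject the answer.")
        self.log.append(Review(question, hypothesis, answer, accepted, reason))

    def acceptance_rate(self) -> float:
        # Share of logged answers that were accepted (0.0 when nothing is logged).
        if not self.log:
            return 0.0
        return sum(r.accepted for r in self.log) / len(self.log)


if __name__ == "__main__":
    # Stand-in model so the sketch runs without any external service.
    def fake_model(prompt: str) -> str:
        return "Draft answer to: " + prompt.splitlines()[0]

    session = HypothesisFirstSession(ask_model=fake_model)
    question = "Why does login return 401 after a token refresh?"
    hypothesis = "I think the issue is in the auth middleware."
    answer = session.ask(question, hypothesis)
    session.record(question, hypothesis, answer, accepted=False,
                   reason="Reproduced locally; the middleware is fine.")
    print(f"Acceptance rate so far: {session.acceptance_rate():.0%}")
```

An acceptance rate that sits near 100% for weeks is not proof of surrender, but it is a cheap signal that it may be time to take the slow path on the next problem.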

The goal is to keep cognitive offloading without sliding into cognitive surrender. Use the tools to extend what you can do. Do not use them to replace the act of thinking itself.

Why this distinction will matter more over time

The capability you do not practice, you lose. Engineers who stop debugging from first principles gradually lose the skill. Developers who never form a hypothesis before reaching for an answer lose the habit of thinking in hypotheses. People who never disagree with AI output lose their calibration for when something is wrong.

These are not abstract concerns. They are skills that matter most exactly when AI tools fail, produce confidently wrong answers, or are simply not available.

Cognitive offloading makes us more capable. Cognitive surrender makes us dependent. The line between them is whether you are still the one doing the thinking.